This document is part of the comprehensive AI policy guide for employees.

A comprehensive policy framework on "Ownership of Outcome" for employees, aligned with both EU and USA policies, must integrate the principles of accountability, transparency, risk management, and ethical responsibility set out in the respective regional guidelines.

Policy Purpose

This policy establishes that employees are responsible for owning the outcomes of their work, including decisions, deliverables, and impacts, ensuring accountability, ethical conduct, and alignment with organizational and regulatory standards.

Key Principles

- Ownership and Accountability: Employees must take full responsibility for the outcomes of their tasks and projects, including positive results and any unintended consequences.

- Transparency and Reporting: Employees should document and report outcomes clearly, enabling traceability and informed decision-making.

- Risk Management: Employees must identify, assess, and mitigate risks related to their work outcomes, adhering to organizational risk frameworks.

- Ethical and Legal Compliance: Outcomes must comply with applicable laws, regulations, and ethical standards, including data protection, privacy, and fairness.

- Continuous Improvement: Employees are encouraged to learn from outcomes to improve future performance and organizational practices.

Policy Scope

This policy applies to all employees involved in decision-making, development, deployment, or management of products, services, or processes within the organization.

Roles and Responsibilities

- Employees: Own and be accountable for their work outcomes; proactively manage risks; ensure compliance.

- Managers: Support employees in understanding ownership expectations; facilitate risk management and compliance.

- Compliance and Risk Teams: Provide frameworks, training, and oversight to ensure policy adherence.

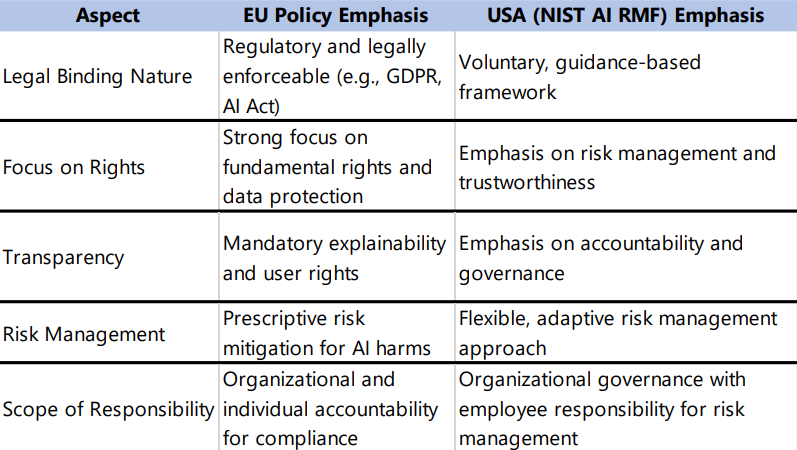

The EU policy framework, particularly under regulations such as the General Data Protection Regulation (GDPR) and the EU AI Act, emphasizes:

- Accountability and Transparency: Organizations and individuals must demonstrate accountability for AI systems and data processing activities, ensuring outcomes are explainable and traceable.

- Risk Management: Continuous assessment and mitigation of risks related to AI and data use are mandatory.

- User Rights and Ethical Use: Outcomes must respect fundamental rights, including privacy, non-discrimination, and fairness.

The EU approach mandates that outcome ownership includes responsibility for protecting individual rights and ensuring transparency in automated decisions, with strict adherence to data protection principles.

The USA policy, as per the NIST AI Risk Management Framework (AI RMF 1.0), highlights:

- Governance and Accountability: Organizations and employees must govern AI risks throughout the lifecycle, ensuring responsible development and deployment.

- Risk Framing and Management: Employees must understand and manage AI risks, including safety, security, fairness, and privacy.

- Human-Centric and Social Responsibility: The framework emphasizes the professional responsibility of employees to consider societal impacts and maintain the trustworthiness of AI outcomes.

- Flexibility and Adaptability: The framework is voluntary and adaptable, encouraging organizations to tailor risk management to their context.

The USA policy focuses on embedding risk management and accountability into organizational processes, with employees playing active roles in managing AI system outcomes responsibly.

Employees are the primary owners of the outcomes resulting from their work and decisions. This ownership entails full accountability for the quality, impact, and compliance of outcomes with applicable laws, ethical standards, and organizational policies. Employees must proactively identify and manage risks associated with their outcomes, ensure transparency in reporting, and uphold the principles of fairness, privacy, and social responsibility. This policy aligns with both EU regulatory requirements and the USA's NIST AI Risk Management Framework to foster trustworthy, ethical, and legally compliant organizational practices.

1. Update Governance and IP Policies for AI-Created Output

Redefine internal IP (Intellectual Property) and content liability clauses in contracts to specify that:

- Individuals who initiate, configure, or prompt AI systems are accountable for outcomes.

- AI is a tool, but the human remains the ethical and legal custodian of the result.

2. Create a Human-in-the-Loop (HITL) AI Responsibility Model

- Embed checkpoints in workflows (code, content, models, decisions) that require human review, annotation, and validation of AI outputs.

- Assign named individuals who “sign off” on each AI-generated asset (code, content, decision, recommendation, etc.).
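The checkpoint and sign-off idea above can be illustrated with a small gate that blocks release until a named person has approved the asset. This is only a sketch under stated assumptions: the names `AIAsset`, `sign_off`, and `can_release`, along with the example asset ID and reviewer, are hypothetical and not prescribed by this policy.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIAsset:
    """An AI-generated asset awaiting human review (hypothetical structure)."""
    asset_id: str
    kind: str                               # e.g. "code", "content", "decision"
    reviewer: Optional[str] = None          # named individual who signed off
    signed_off_at: Optional[datetime] = None


def sign_off(asset: AIAsset, reviewer: str) -> AIAsset:
    """Record a named human reviewer; an empty name is rejected."""
    name = reviewer.strip()
    if not name:
        raise ValueError("Sign-off requires a named individual.")
    asset.reviewer = name
    asset.signed_off_at = datetime.now(timezone.utc)
    return asset


def can_release(asset: AIAsset) -> bool:
    """Checkpoint: only assets with a recorded sign-off may proceed."""
    return asset.reviewer is not None


draft = AIAsset(asset_id="asset-001", kind="content")
print(can_release(draft))   # False: no human has signed off yet
sign_off(draft, "Jane Doe")
print(can_release(draft))   # True: a named reviewer is on record
```

In practice such a gate would sit inside whatever workflow tool the organization already uses; the point of the sketch is that the approval is tied to a named person and a timestamp.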

3. AI Output Disclosure & Attribution Requirements

Each AI-assisted output should disclose:

- Who created the prompt?

- What AI system was used?

- What parts were edited, reviewed, or accepted as-is?
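The three disclosure questions above could be captured as a small structured record attached to each AI-assisted asset. The following is a minimal sketch; the class `AIOutputDisclosure`, its field names, the `ReviewStatus` categories, and the example values are illustrative assumptions rather than a format this policy defines.

```python
import json
from dataclasses import dataclass, asdict
from enum import Enum


class ReviewStatus(Enum):
    """How the human handled the AI output (hypothetical categories)."""
    EDITED = "edited"
    REVIEWED = "reviewed"
    ACCEPTED_AS_IS = "accepted_as_is"


@dataclass(frozen=True)
class AIOutputDisclosure:
    """Hypothetical record answering the three disclosure questions."""
    prompt_author: str            # Who created the prompt?
    ai_system: str                # What AI system was used?
    review_status: ReviewStatus   # Edited, reviewed, or accepted as-is?
    notes: str = ""               # Optional detail on which parts changed

    def to_json(self) -> str:
        data = asdict(self)
        # Store the enum as its plain string value so the JSON is portable.
        data["review_status"] = self.review_status.value
        return json.dumps(data, sort_keys=True)


record = AIOutputDisclosure(
    prompt_author="Jane Doe",
    ai_system="example-assistant",
    review_status=ReviewStatus.EDITED,
    notes="Introduction rewritten by hand; summary accepted as-is.",
)
print(record.to_json())
```

A record like this can travel with the asset as file metadata or as a row in an internal register, whichever the organization already maintains.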

4. AI Outcome Escalation & Exception Framework

Escalate AI outputs that raise any of the following concerns:

- Bias

- IP violations

- Defamatory or unsafe content

5. Role-Based Training: "AI Accountability Certification"

- Ethics of AI-generated content

- Risk and liability of AI misuse

- When and how to intervene or override AI outputs

6. Integrate AI Ownership into Performance & Legal Review

- Who approved the output?

- Was the outcome reviewed, tested, and signed off?

1. Act as the “Responsible Prompt Owner”

- The purpose of the AI use.

- Your prompt.

- Whether you accepted the output fully, partially, or rejected it.

2. Validate & Curate Every Output

- Cross-checking facts

- Reviewing bias and tone

- Ensuring it aligns with company policy and compliance standards

3. Log Prompting Decisions in High-Stakes Contexts

- In sensitive areas (e.g., legal, policy, customer communications), keep prompt logs and version histories.

- Be able to explain why you used AI and how you interpreted its result.
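A prompt log for sensitive work can be as simple as one JSON line per decision. The sketch below assumes a hypothetical helper `log_prompt_decision` and hypothetical field names; the three decision values mirror the "fully, partially, or rejected" wording used earlier in this guide. It writes to any text stream, so the same function works with a file or an in-memory buffer.

```python
import io
import json
from datetime import datetime, timezone
from typing import TextIO

# Decision values are an assumption modeled on "accepted fully, partially, or rejected".
ALLOWED_DECISIONS = {"accepted", "partially_accepted", "rejected"}


def log_prompt_decision(
    stream: TextIO,
    *,
    author: str,
    purpose: str,
    prompt: str,
    decision: str,
    rationale: str,
) -> dict:
    """Append one prompt-decision entry to the stream as a JSON line."""
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"decision must be one of {sorted(ALLOWED_DECISIONS)}")
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "author": author,
        "purpose": purpose,        # why AI was used
        "prompt": prompt,          # what was asked
        "decision": decision,      # what was done with the output
        "rationale": rationale,    # how the result was interpreted
    }
    stream.write(json.dumps(entry) + "\n")
    return entry


# Usage with an in-memory buffer; a real log would use an append-mode file.
log = io.StringIO()
log_prompt_decision(
    log,
    author="Jane Doe",
    purpose="Draft a customer notice",
    prompt="Summarize the service change in plain language.",
    decision="partially_accepted",
    rationale="Kept the structure; corrected the dates manually.",
)
print(log.getvalue(), end="")
```

Version history is left to the storage layer here; keeping the log in an append-only file under version control is one way to get it.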

4. Use an "Ownership Declaration" for AI Outputs

- In submitted work, use simple footnotes or metadata like: “This content was AI-assisted. Final ownership and edits were made by [Your Name].”

- Especially important for client-facing work, legal docs, research, etc.
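The declaration above can be generated consistently instead of typed by hand. This is a minimal sketch: the function names `ownership_declaration` and `append_declaration` are hypothetical, and the wording is copied from the example footnote in this section.

```python
def ownership_declaration(owner_name: str) -> str:
    """Build the footnote text for a named owner; an empty name is rejected."""
    owner = owner_name.strip()
    if not owner:
        raise ValueError("An ownership declaration needs a named individual.")
    return (
        "This content was AI-assisted. "
        f"Final ownership and edits were made by {owner}."
    )


def append_declaration(document_text: str, owner_name: str) -> str:
    """Return the document with the declaration added as a closing footnote."""
    return f"{document_text.rstrip()}\n\n{ownership_declaration(owner_name)}\n"


print(append_declaration("Quarterly summary for the client.", "Jane Doe"))
```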

5. Report & Reflect on Consequences

If an AI-assisted output leads to any of the following:

- Misinformation

- Harm

- Compliance violations

Immediately report it via internal incident systems, and participate in lessons-learned reviews.
