This document is part of the comprehensive AI policy guide for employees.

Ensuring the accuracy and reliability of AI system outputs is critical for Consumer Packaged Goods (CPG) companies that rely on AI for decision-making, customer insights, supply chain optimization, and regulatory compliance. Inaccurate or unreliable AI results can lead to poor business decisions, regulatory violations, and loss of consumer trust. This section proposes a tailored framework for managing accuracy and reliability, grounded in the regulatory mandates and best practices outlined in the EU Artificial Intelligence Act (AI Act) and the NIST AI Risk Management Framework (AI RMF).

This document establishes a comprehensive policy framework to ensure the accuracy and reliability of AI system outputs within Consumer Packaged Goods (CPG) companies. The primary purpose of this policy is to safeguard business decisions, maintain regulatory compliance, and uphold consumer trust by ensuring that AI-driven insights and actions are consistently accurate and dependable.

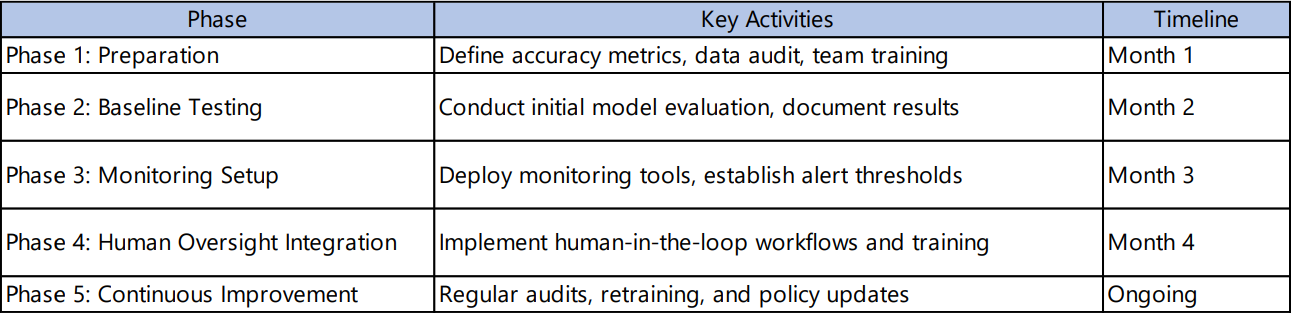

The policy is grounded in leading global standards, including the EU Artificial Intelligence Act (AI Act) and the NIST AI Risk Management Framework (AI RMF). It outlines clear requirements and best practices across five key areas:

1. Data Quality and Representativeness:

Ensures all AI datasets are relevant, comprehensive, and unbiased, with regular audits and robust data governance to minimize errors and bias.

2. Measurement and Documentation of Accuracy:

Mandates the use of quantitative accuracy metrics and thorough documentation of testing methodologies, datasets, and results to support transparency and accountability.

3. Robustness and Generalizability:

Requires AI models to perform reliably across diverse and changing real-world conditions, with stress testing and scenario analysis to validate their resilience.

4. Human Oversight and Transparency:

Incorporates human-in-the-loop mechanisms for critical decisions and emphasizes clear communication of AI system capabilities and limitations.

5. Risk Management and Continuous Monitoring:

Establishes ongoing monitoring, feedback loops, and periodic reviews to detect and address accuracy drift or unexpected outputs throughout the AI lifecycle.

By implementing this policy, CPG companies can proactively manage the risks associated with AI, ensure compliance with evolving regulations, and foster trust among consumers and stakeholders. The framework supports ethical AI deployment, continuous improvement, and business integrity in a rapidly advancing technological landscape.

Policy Reference: The EU AI Act requires that “training, validation, and testing data sets are relevant, representative, free of errors and complete, taking into account the intended purpose of the system” (AI Act, Article 10, Section 2).

Application: Ensure all datasets used in AI development are comprehensive and representative of real-world conditions to minimize bias and error. Employ standards such as ISO/IEC 5259-2 for assessing data accuracy, completeness, and consistency.

Practice: Regularly audit datasets for errors or gaps and update them to reflect current market and consumer conditions.

How to Create Clean, Representative, and Unbiased Data

To ensure datasets are clean, representative, and unbiased, employ robust data collection and processing techniques such as:

- Sampling Methods: Use stratified, random, or systematic sampling to capture diverse and representative subsets of the population, minimizing selection bias and ensuring the dataset reflects real-world variability.

- Anonymization and De-identification: Remove or mask sensitive personal information (e.g., names, addresses, race, gender, age) when such attributes are not relevant to the AI system’s purpose. Techniques include data omission, pseudonymization, aggregation, and noise addition to protect privacy while preserving data utility.

- Bias Mitigation: Regularly audit datasets to identify and correct imbalances or underrepresented groups. Remove sensitive attributes like race, gender, and age where they do not contribute to the model’s function to prevent discriminatory outcomes.

- Data Governance Practices: Implement strict data management policies covering data acquisition, labeling, storage, and filtration to maintain data integrity and traceability throughout the AI system lifecycle.

- Data Validation and Verification: Implement automated and manual validation processes to detect anomalies, inconsistencies, or inaccuracies in the data. Techniques such as cross-validation, outlier detection, and consistency checks help ensure the dataset remains reliable and aligned with the intended use case.

These practices align with the EU AI Act’s emphasis on data relevance, representativeness, and error-free quality, while also addressing ethical considerations related to privacy and fairness.
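As an illustration of the stratified sampling technique listed above, the following is a minimal sketch using only the Python standard library. The record layout, the `region` field, and the 20% sampling fraction are hypothetical choices made for the example; they are not prescribed by this policy.

```python
import random
from collections import defaultdict


def stratified_sample(records, key, fraction, seed=0):
    """Draw the same fraction from every stratum so small groups stay represented."""
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible for audits
    strata = defaultdict(list)
    for rec in records:
        strata[rec[key]].append(rec)

    sample = []
    for group in sorted(strata):
        rows = strata[group]
        # Keep at least one row per stratum, even if fraction * size rounds to zero.
        k = max(1, round(len(rows) * fraction))
        sample.extend(rng.sample(rows, k))
    return sample


# Hypothetical purchase records: 90 urban shoppers and 10 rural shoppers.
records = [{"id": i, "region": "urban" if i < 90 else "rural"} for i in range(100)]
sample = stratified_sample(records, key="region", fraction=0.2)

counts = defaultdict(int)
for rec in sample:
    counts[rec["region"]] += 1
print(dict(counts))  # {'rural': 2, 'urban': 18}
```

A plain random sample of 20 records could easily contain no rural shoppers at all; sampling within each stratum guarantees the minority group keeps its share.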

The NIST Artificial Intelligence Risk Management Framework (AI RMF 1.0) addresses accuracy in its "Valid and Reliable" section, under "AI Risks and Trustworthiness". That section defines accuracy and discusses related concepts:

- Accuracy Definition: Accuracy is defined by ISO/IEC TS 5723:2022 as “closeness of results of observations, computations, or estimates to the true values or the values accepted as being true.”

- Importance of External Validity and Representative Test Sets: The document further states that "Measures of accuracy should consider computational-centric measures (e.g., false positive and false negative rates), human-AI teaming, and demonstrate external validity (generalizable beyond the training conditions)." It also emphasizes that "Accuracy measurements should always be paired with clearly defined and realistic test sets – that are representative of conditions of expected use – and details about test methodology; these should be included in associated documentation."

Examples of Associated Documentation

1. Test Dataset Description: Summary of the data source, sample size, and how the data reflects real-world use.

2. Test Methodology Report: Outline of evaluation procedures, chosen accuracy metrics (e.g., precision, recall), and why they were selected.

3. Accuracy Results Summary: Tables or charts showing accuracy scores, error rates, and key findings.

4. External Validity Assessment: Short notes on how well the model performs on new or different data compared to the training set.

5. Version Control Log: Simple record of dataset and model versions used during testing.

6. Explainability and Limitations Note: Brief explanation of how the model makes decisions and any known limitations or edge cases.

Application: Implement quantitative metrics such as false positive/negative rates, precision, recall, and confidence scores to evaluate AI outputs. Document test methodologies, datasets, and results thoroughly to support transparency and accountability.

Practice: Disaggregate accuracy metrics across different customer segments or product categories to identify and address disparities.
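The metrics named above (precision, recall, false positive and false negative rates) can be computed per segment with a few lines of Python. This is a sketch under stated assumptions: the segment names (`loyalty`, `new`) and the labelled rows are invented for illustration, and a production pipeline would more likely rely on an evaluation library.

```python
from collections import defaultdict


def safe_div(numerator, denominator):
    """Return NaN instead of raising when a segment has no relevant cases."""
    return numerator / denominator if denominator else float("nan")


def binary_metrics(y_true, y_pred):
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "precision": safe_div(tp, tp + fp),
        "recall": safe_div(tp, tp + fn),
        "false_positive_rate": safe_div(fp, fp + tn),
        "false_negative_rate": safe_div(fn, fn + tp),
    }


def metrics_by_segment(rows):
    """rows: iterable of (segment, true_label, predicted_label)."""
    grouped = defaultdict(lambda: ([], []))
    for segment, truth, pred in rows:
        grouped[segment][0].append(truth)
        grouped[segment][1].append(pred)
    return {seg: binary_metrics(t, p) for seg, (t, p) in grouped.items()}


# Hypothetical churn predictions for two customer segments.
rows = [
    ("loyalty", 1, 1), ("loyalty", 1, 1), ("loyalty", 0, 0), ("loyalty", 0, 1),
    ("new", 1, 0), ("new", 1, 1), ("new", 0, 0), ("new", 0, 0),
]
by_segment = metrics_by_segment(rows)
for segment, values in by_segment.items():
    print(segment, values)
```

In this toy data the model recalls every positive case for loyalty customers but only half of them for new customers, a disparity that a single aggregate score would hide.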

Policy Reference: Robustness is defined as the “ability of a system to maintain its level of performance under a variety of circumstances” (ISO/IEC TS 5723:2022, cited by NIST AI RMF). The EU AI Act also emphasizes robustness as a key requirement for high-risk AI systems.

Application: Design AI models to perform reliably across diverse conditions and data variations typical in the CPG sector, such as seasonal demand shifts or supply chain disruptions.

Examples Illustrating the Importance of Robustness and Its Compliance Implications under the EU AI Act

1. Supply Chain Prediction Errors:

AI systems forecasting supply chain disruptions must be robust to diverse and unforeseen conditions. A failure to anticipate critical shortages can cause production delays or missed contractual obligations. Such failures may violate Article 9 (Risk Management System), which requires providers to identify and mitigate risks throughout the AI lifecycle, and Article 10 (Data and Data Governance), mandating relevant, representative, and error-free data. Additionally, inaccurate predictions causing environmental harm or consumer detriment breach the Act’s fundamental rights protections (Recitals 1, 4, 7, 8). Non-compliance can lead to regulatory penalties and legal liability.

2. Demand Forecasting under Seasonal Variations:

Incorrect demand predictions leading to overstocking or stockouts can violate Article 16 (Compliance with Requirements), which requires AI systems to meet all mandated standards, including accuracy and robustness. Overstocking may breach environmental protection principles (Recital 4), while stockouts impact consumer protection rights (Recital 8).

3. Quality Control in Manufacturing:

AI systems that fail to maintain performance under changing conditions risk allowing defective products to reach consumers, violating Article 9 (Risk Management System) and Article 11 (Technical Documentation), which requires thorough documentation of AI system performance and limitations. This also breaches product safety regulations and fundamental rights protections emphasized by the Act (Recital 8).

4. Supplier Compliance Monitoring:

AI tools assessing supplier adherence to labor or environmental standards must reliably detect violations. Failure to do so risks breaching Article 9 (Risk Management System) and Article 16 (Compliance with Requirements), exposing companies to reputational damage and regulatory penalties under the Act’s ethical sourcing and fundamental rights provisions (Recital 9).

Practice: Conduct stress testing and scenario analysis to validate model performance under edge cases and unforeseen conditions.
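One way to picture stress testing and scenario analysis is to replay a forecasting model against deliberately distorted demand histories and compare its error with a tolerance. The sketch below assumes a simple moving-average forecaster as a stand-in for the real model; the demand figures, the two shock scenarios, and the 10% tolerance are hypothetical.

```python
def moving_average_forecast(history, window=3):
    """Forecast the next period as the mean of the last `window` observations."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def mape(actuals, forecasts):
    """Mean absolute percentage error (actuals must be non-zero)."""
    return sum(abs(a - f) / a for a, f in zip(actuals, forecasts)) / len(actuals)


def backtest(series, window=3):
    """One-step-ahead MAPE when the forecaster is replayed over the series."""
    actuals, forecasts = [], []
    for t in range(window, len(series)):
        forecasts.append(moving_average_forecast(series[:t], window))
        actuals.append(series[t])
    return mape(actuals, forecasts)


# Hypothetical weekly unit sales and two stress scenarios derived from them.
baseline = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101]
scenarios = {
    "baseline": baseline,
    "holiday_spike": baseline[:6] + [v * 1.8 for v in baseline[6:8]] + baseline[8:],
    "supply_shock": baseline[:5] + [v * 0.4 for v in baseline[5:]],
}

TOLERANCE = 0.10  # hypothetical maximum acceptable MAPE
report = {name: backtest(series) for name, series in scenarios.items()}
failing = [name for name, err in report.items() if err > TOLERANCE]

for name, err in report.items():
    print(f"{name}: MAPE={err:.1%}")
print("Scenarios exceeding tolerance:", failing)
```

Here the forecaster stays within tolerance on ordinary data but fails both shock scenarios, which is the kind of finding a robustness review should surface and document before deployment.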

- Policy Reference: The EU AI Act mandates “human oversight” to ensure AI decisions can be reviewed and corrected (AI Act, Article 14). Transparency about AI system capabilities and limitations is also required to inform users and stakeholders.

- Application: Incorporate human-in-the-loop mechanisms for critical decisions, especially those affecting consumers or regulatory compliance. Provide clear documentation and user guidance on AI system accuracy and limitations.

- Practice: Use confidence scores or reliability indicators alongside AI outputs and label AI-generated content clearly.
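A human-in-the-loop gate driven by confidence scores might look like the sketch below. The 0.85 review threshold, the set of always-reviewed decision types, and the wording of the disclosure label are assumptions chosen for illustration; actual values would be set by the policy owner.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.85              # hypothetical minimum confidence for automation
CRITICAL_LABELS = {"product_recall"}  # hypothetical decisions that always need a human


@dataclass
class Decision:
    label: str
    confidence: float
    route: str        # "auto_approve" or "human_review"
    disclosure: str   # text shown to the user alongside the output


def route_prediction(label: str, confidence: float) -> Decision:
    """Send low-confidence or critical outputs to a human reviewer."""
    needs_review = confidence < REVIEW_THRESHOLD or label in CRITICAL_LABELS
    return Decision(
        label=label,
        confidence=confidence,
        route="human_review" if needs_review else "auto_approve",
        disclosure=f"AI-generated (confidence {confidence:.0%})",
    )


print(route_prediction("reorder_stock", 0.92))
print(route_prediction("reorder_stock", 0.60))
print(route_prediction("product_recall", 0.99))
```

Routing critical decision types to review regardless of confidence reflects the idea that a high score does not remove the need for oversight where consumers or compliance are affected.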

- Policy Reference: NIST AI RMF’s “Measure” and “Manage” functions emphasize ongoing risk assessment, monitoring, and mitigation to maintain accuracy and reliability throughout the AI lifecycle.

- Application: Establish continuous monitoring systems to detect accuracy drift, unexpected outputs (“hallucinations”), or degradation in model performance. Conduct periodic reviews and recalibrations.

- Practice: Implement automated alerts for anomalies and integrate feedback loops for user-reported errors.
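The monitoring and alerting practice above can be sketched as a rolling accuracy check. The class name `AccuracyMonitor`, the window size, and the 80% accuracy floor are hypothetical; confirmed outcomes could come from ground-truth data or from user-reported errors fed back into `record`.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent predictions and flag drift."""

    def __init__(self, window=50, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window)  # True where prediction matched reality
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual):
        """Log one confirmed outcome, e.g. from ground truth or a user report."""
        self.outcomes.append(prediction == actual)

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def alert(self):
        """Alert only once the window is full, to avoid noise from tiny samples."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.min_accuracy


monitor = AccuracyMonitor(window=10, min_accuracy=0.8)
for _ in range(10):
    monitor.record("in_stock", "in_stock")     # ten correct predictions
alert_before = monitor.alert()

for _ in range(3):
    monitor.record("in_stock", "stockout")     # three recent misses
alert_after = monitor.alert()

print(alert_before, alert_after)  # False True
```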

1. Data Quality and Representativeness

Action Steps:

- Conduct initial and periodic audits of training, validation, and test datasets to verify relevance, representativeness, and completeness.

- Use data profiling tools to detect missing values, outliers, and inconsistencies.

- Integrate diverse data sources (e.g., sales, supply chain, customer feedback) to enhance representativeness.

- Document data provenance and preprocessing steps to maintain traceability.

Tools & Techniques:

- Data quality dashboards, statistical sampling, and bias detection algorithms.

- ISO/IEC standards for data quality assessment.

Responsible Roles: Data engineers, data scientists, AI model owners, and compliance officers.
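The profiling step in the action steps above (missing values and outliers) can be approximated with the standard library. This sketch assumes a single numeric column with `None` marking missing entries and uses the common 1.5 × IQR rule for outliers; both the sample data and the rule are illustrative choices, not requirements of this policy.

```python
import statistics


def profile_column(values):
    """Report the missing-value rate and IQR-based outliers for one numeric column."""
    present = [v for v in values if v is not None]
    missing_rate = 1 - len(present) / len(values)

    q1, _, q3 = statistics.quantiles(present, n=4)  # quartile cut points
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    outliers = [v for v in present if v < low or v > high]

    return {"missing_rate": missing_rate, "bounds": (low, high), "outliers": outliers}


# Hypothetical weekly units sold; None marks a missing reading.
weekly_units = [120, 118, None, 125, 122, 119, 900, 121, None, 123]
profile = profile_column(weekly_units)
print(profile)
```

For this data the check flags the value 900 and reports two of ten readings as missing; whether such a value is an entry error or a genuine promotion spike still needs a human decision, and that decision belongs in the data provenance record.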

2. Measurement and Documentation of Accuracy

Action Steps:

- Define key accuracy metrics relevant to the AI use case (e.g., precision, recall, F1 score, RMSE).

- Establish baseline performance targets aligned with business objectives and regulatory expectations.

- Implement automated testing pipelines to measure accuracy on holdout datasets regularly.

- Maintain detailed records of test datasets, evaluation methods, and results for audits.

Tools & Techniques:

- Model evaluation libraries (e.g., scikit-learn, TensorFlow Model Analysis).

- Version control for datasets and model artifacts.

Responsible Roles: AI developers, quality assurance teams, and audit personnel.
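The action steps above (metrics, baseline targets, automated holdout testing, audit records) can be combined into one small evaluation gate. The following is a sketch only: the target values, version strings, and holdout data are hypothetical, and the record format is one possible layout rather than a mandated schema.

```python
import json
import math


def rmse(actual, predicted):
    """Root mean squared error for a regression-style forecast."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def f1_score(y_true, y_pred):
    """F1 for binary labels, computed as 2*TP / (2*TP + FP + FN)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denominator = 2 * tp + fp + fn
    return 2 * tp / denominator if denominator else 0.0


def evaluation_record(model_version, dataset_version, metrics, targets):
    """Build an audit-ready record of one holdout evaluation."""
    checks = {
        "f1": metrics["f1"] >= targets["f1_min"],
        "rmse": metrics["rmse"] <= targets["rmse_max"],
    }
    return {
        "model_version": model_version,
        "dataset_version": dataset_version,
        "metrics": metrics,
        "targets": targets,
        "checks": checks,
        "passed": all(checks.values()),
    }


# Hypothetical holdout results and baseline targets.
metrics = {
    "f1": f1_score([1, 1, 1, 0, 0, 1, 0, 1], [1, 1, 0, 0, 0, 1, 1, 1]),
    "rmse": rmse([100, 110, 95, 105], [98, 113, 96, 101]),
}
targets = {"f1_min": 0.75, "rmse_max": 5.0}
record = evaluation_record("demand-model-1.4.0", "holdout-2024Q4", metrics, targets)
print(json.dumps(record, indent=2))
```

Storing the model and dataset versions next to the scores is what lets an auditor later reproduce a reported figure.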

3. Robustness and Generalizability

Action Steps:

- Perform stress testing using edge cases, adversarial examples, and simulated scenarios relevant to CPG operations (e.g., supply chain disruptions).

- Validate model performance across different demographic segments and product categories.

- Regularly retrain models with updated data to adapt to changing conditions.

Tools & Techniques: Scenario simulation platforms, adversarial testing frameworks.

Responsible Roles: AI researchers, risk managers, and domain experts.

4. Human Oversight and Transparency

Action Steps:

- Design human-in-the-loop workflows for critical AI decisions (e.g., product recalls, marketing campaigns).

- Provide clear explanations of AI outputs, including confidence scores and known limitations.

- Train users on interpreting AI results and escalation protocols for anomalies.

Tools & Techniques: Explainable AI (XAI) tools, user interface design for transparency.

Responsible Roles: Product managers, compliance officers, and end-user trainers.

5. Risk Management and Continuous Monitoring

Action Steps:

- Deploy monitoring systems to track accuracy drift, data distribution changes, and unexpected outputs in real time.

- Set thresholds for automated alerts and trigger investigations when exceeded.

- Schedule periodic model audits and recalibrations based on monitoring data.

Tools & Techniques: AI monitoring platforms (e.g., Inspeq, Fiddler AI), anomaly detection algorithms.

Responsible Roles: AI operations teams, risk management, and compliance.
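Data distribution changes can be tracked with a simple statistic such as the Population Stability Index (PSI), which compares how a feature is spread across bins in a reference sample versus live data. The sketch below is an assumption-laden illustration: the bin edges and data are invented, and the 0.25 alert threshold is a widely used rule of thumb rather than a value taken from the AI Act or the NIST AI RMF.

```python
import math


def bin_shares(values, edges):
    """Share of values falling into each bin defined by ascending `edges`."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(1 for e in edges if v >= e)] += 1
    # Floor at a tiny share so empty bins do not cause log(0) or division by zero.
    return [max(c / len(values), 1e-6) for c in counts]


def population_stability_index(reference, live, edges):
    ref_shares = bin_shares(reference, edges)
    live_shares = bin_shares(live, edges)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_shares, live_shares))


PSI_ALERT = 0.25  # rule-of-thumb threshold, assumed for this example

# Hypothetical basket values at training time versus in production.
edges = [20, 40]
reference = [10] * 10 + [30] * 10 + [50] * 10
live = [10] * 2 + [30] * 8 + [50] * 20

psi_same = population_stability_index(reference, reference, edges)
psi_shifted = population_stability_index(reference, live, edges)
print(f"unchanged: {psi_same:.3f}, shifted: {psi_shifted:.3f}")
print("investigate drift:", psi_shifted > PSI_ALERT)
```

A PSI check of this kind needs no ground-truth labels, so it can raise an early warning before accuracy figures become available.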
